See It in Action

Explaining this blog using our AI video generation application, showcasing what our platform can produce.

Jaikumar Mohite

Author · AI Research Contributor

Nariyoshi Sige

Co-Author · Representative Director and CEO

Let's talk about something that's quietly reshaping the world. If you've asked ChatGPT a question, been stunned by an image from DALL-E, or watched an AI-generated video, you've witnessed a kind of magic. But this magic isn't an overnight trick. It's the result of a decades-long technological revolution that is fundamentally changing how we create, communicate, and even think.

This is the AIGC - Artificial Intelligence Generated Content - Revolution.

From the early statistical models of the 1950s to the mind-bending power of today's large language models, the journey has been one of exponential growth. But how did we get here? What are the key breakthroughs, the "aha!" moments, that brought us from simple text generators to AI that can write code, compose music, and design art?

In this comprehensive post, we'll trace that exact history. We'll start with the simple definitions for beginners, journey through the "deep learning" breakthroughs for intermediate readers, and finally, dive into the advanced architectures that power the revolution today.

AIGC, or Artificial Intelligence Generated Content, simply refers to digital content - like images, music, articles, and code - that is created by an AI model rather than by a human.

Think of it as an incredibly skilled apprentice. You provide an instruction (a "prompt"), and the AI uses its vast training to generate something new. The core mission of AIGC is to make content creation faster, more accessible, and more efficient.

This two-step idea (an instruction goes in, generated content comes out) isn't new. So, why the sudden explosion? The difference between today's AIGC and older models lies in three key drivers:

The current flagships of this new era are models like ChatGPT (specialized in conversation), DALL-E 2 (a master artist for text-to-image), and Codex (a programmer that speaks human language).

To understand today's revolution, we have to look back. The history of generative models can be split into two major eras.

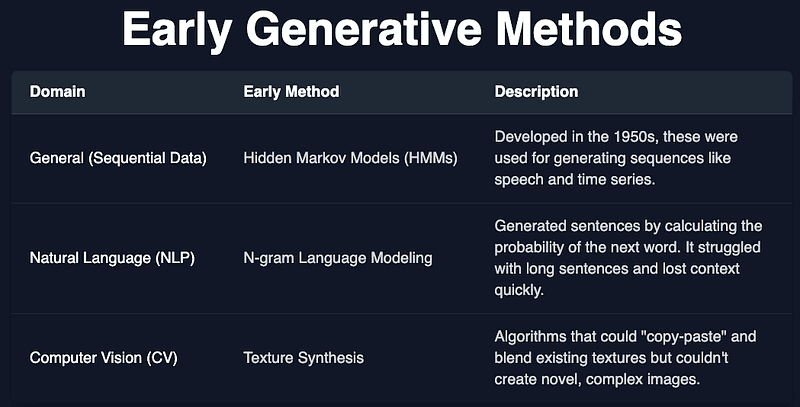

1. Early Foundations (Pre-Deep Learning Era)

Before the 2010s, models focused on generating sequential data, like text or speech. They were clever, but limited.

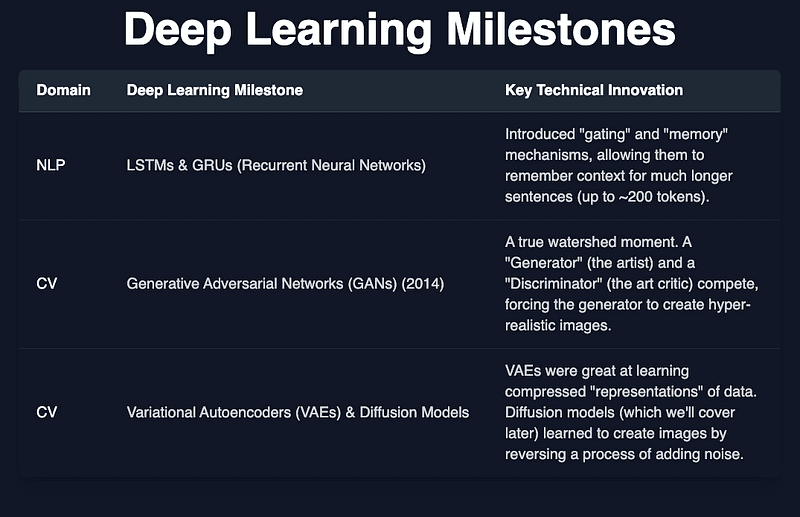

2. The Deep Learning Breakthroughs

This is when things got exciting. With the advent of deep learning, models gained the ability to learn complex patterns on their own.

For years, natural language processing (NLP) and computer vision (CV) models evolved on separate paths. Then, in 2017, a paper from Google titled "Attention Is All You Need" introduced the Transformer.

This was the missing piece:

The Transformer quickly became the dominant backbone for everything: BERT, the GPT series, and even models for computer vision and multimodal tasks.
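For readers who want to see what "attention" means in practice, the core operation of the Transformer is scaled dot-product attention, softmax(QKᵀ / √d_k)·V, as defined in the paper mentioned above. What follows is a minimal sketch in plain Python, not a production implementation: the function names, the toy 2-dimensional "embeddings", and the choice to reuse the same vectors as queries, keys, and values are all illustrative assumptions. Real models add learned projection matrices, multiple heads, and batched tensor math.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Compute softmax(Q K^T / sqrt(d_k)) V for lists of vectors.

    Returns the output vectors and the attention weights per query.
    """
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Each output is the attention-weighted average of the values.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Toy example: three "tokens" with made-up 2-dimensional embeddings.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = scaled_dot_product_attention(tokens, tokens, tokens)

for i, row in enumerate(weights):
    print(f"token {i} attends with weights", [round(w, 3) for w in row])
```

Each row of weights sums to 1, so every token's output is a blend of all the tokens in the sequence, weighted by how similar they are to it. That ability to look at the whole sequence at once is the part that earlier sequential models lacked.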

This brings us to the modern era. Today's AIGC models are all built on the Transformer, but they use it in three different ways.

Two other major innovations are crucial to today's AIGC:

1. Reinforcement Learning from Human Feedback (RLHF)

2. Diffusion Models

This is the cutting-edge technique behind image generators like Stable Diffusion and DALL-E 2.

The model is trained in two steps:
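In the widely used DDPM formulation (an assumption here, since the post does not name a specific variant), these two steps are usually described as a forward process that gradually adds Gaussian noise to an image and a reverse process in which a neural network learns to remove that noise. The sketch below covers only the forward step, using the closed form x_t = √ᾱ_t · x_0 + √(1 − ᾱ_t) · ε. The linear noise schedule, the 1000-step count, the function names, and the four-number stand-in for an "image" are illustrative choices, not details from any particular product.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Noise variances that grow linearly from beta_start to beta_end."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = product of (1 - beta_s) for all s up to t."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(x0, t, alpha_bars, rng):
    """Jump straight to step t: sqrt(a_bar)*x0 + sqrt(1 - a_bar)*noise."""
    a_bar = alpha_bars[t]
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [
        math.sqrt(a_bar) * x + math.sqrt(1.0 - a_bar) * n
        for x, n in zip(x0, noise)
    ]
    return x_t, noise


rng = random.Random(0)  # fixed seed so the run is repeatable
betas = linear_beta_schedule(1000)
alpha_bars = cumulative_alphas(betas)

x0 = [0.5, -0.25, 1.0, 0.0]  # hypothetical stand-in for image pixels
x_early, _ = add_noise(x0, 10, alpha_bars, rng)
x_late, _ = add_noise(x0, 999, alpha_bars, rng)

print("signal kept at step 10: ", round(math.sqrt(alpha_bars[10]), 4))
print("signal kept at step 999:", round(math.sqrt(alpha_bars[999]), 4))
```

Early steps keep almost all of the original signal, while the last step is essentially pure noise. During training, a network is shown the noisy sample and asked to predict the noise that was added; generation then runs that learned denoising in reverse, starting from random noise.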

Multimodal AIGC models generate raw modalities, such as images or audio, by learning the complex connections and interactions between different data types.

As AIGC becomes ubiquitous, we face critical challenges. This power comes with immense responsibility.

Factuality & Misinformation

Toxicity and Bias

Privacy Vulnerabilities

Reasoning and Reliability

The AIGC revolution is more than just a new set of tools. It's the emergence of a new creative collaborator.

We are moving from an era of information retrieval (like Google) to an era of information synthesis. The challenge ahead is not just to build bigger models, but to build wiser ones. The AIGC revolution has given us a powerful new partner, and our next great task is to learn how to work with it responsibly, ethically, and creatively.

This history is still being written, and the future is far from certain. That leaves us all with critical questions to consider.

We'd love to hear your thoughts in the comments:

As AI becomes a standard tool for art, music, and writing, what do you think it will mean to be a "creative" person in the next decade? Will the most valuable skill shift from the craft of creation to the vision of direction and curation?

When AIGC can generate realistic text, images, and video that are indistinguishable from human-made content, what happens to our concept of "truth" or "authenticity" online? What new systems might we need to verify what is real?